How is LiDAR different from radar and camera-based systems?

LiDAR and radar are both used to determine the range, angle, and velocity of moving objects. Radar uses radio waves rather than laser light, whereas cameras capture a 2D image made up of millions of pixels, which must then be processed to interpret the scene.

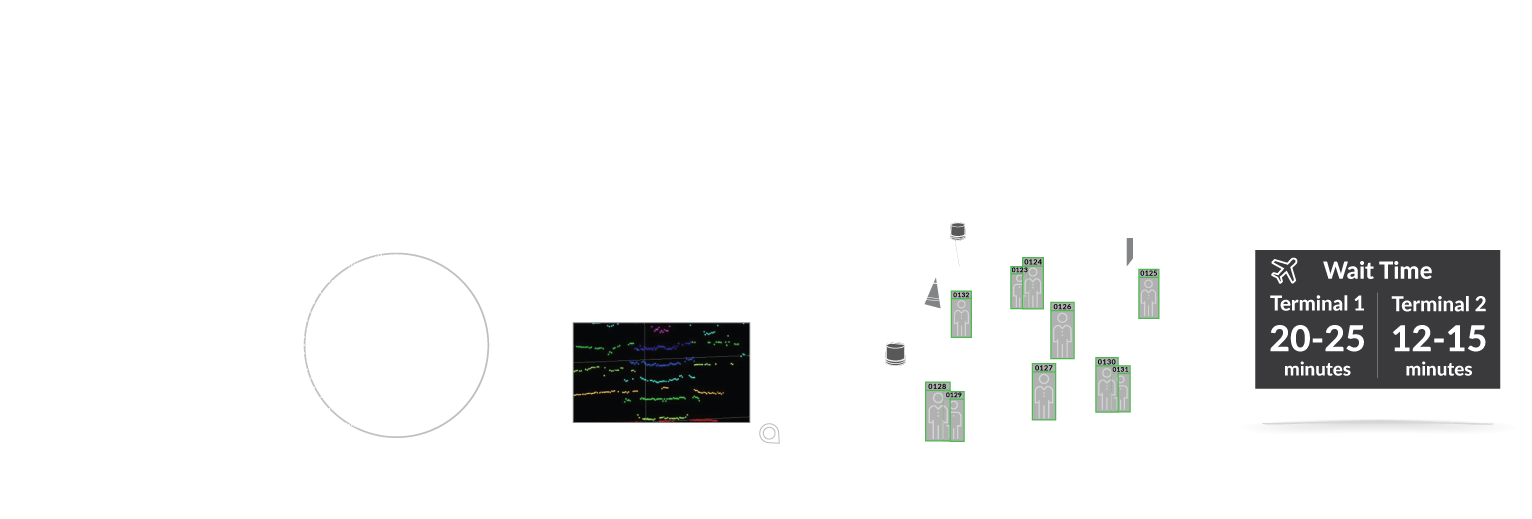

Unlike radar, LiDAR can provide a full real-time 3D image of the world around it. Moreover, unlike cameras, LiDAR poses no PII (Personally Identifiable Information) risk and has a lower false-alarm rate. LiDAR images the target at the same time as it measures the object’s distance, which yields a 3D view of the object and a precise calculation of the direction in which it is moving, something neither cameras nor radar can provide.
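To make the idea of measuring distance and building a 3D view concrete, here is a minimal sketch. It assumes a pulsed time-of-flight sensor that reports a round-trip time together with the beam's azimuth and elevation angles; the text above does not specify the ranging method, and the function names are hypothetical rather than taken from any real LiDAR SDK.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def range_from_time_of_flight(round_trip_s):
    """Distance to the target, assuming the pulse travels out and back."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def to_cartesian(range_m, azimuth_rad, elevation_rad):
    """Convert one return (range plus beam angles) into an (x, y, z) point."""
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


def velocity(p0, p1, dt_s):
    """Per-axis velocity from two positions of the same object, dt_s apart."""
    return tuple((b - a) / dt_s for a, b in zip(p0, p1))


if __name__ == "__main__":
    # Hypothetical return: 200 ns round trip, beam pointing straight ahead.
    r = range_from_time_of_flight(200e-9)
    print(to_cartesian(r, 0.0, 0.0))
    # Hypothetical object positions in two scans taken 0.5 s apart.
    print(velocity((0.0, 0.0, 0.0), (1.0, 2.0, 0.0), 0.5))
```

Each return already carries a distance, so every measured point has a 3D position, and comparing positions across scans gives a direction of motion. A real sensor would also need to handle noise, calibration, and matching objects between scans, none of which this sketch attempts.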

Furthermore, neither radar nor cameras can see accurately in the dark or through weather conditions such as rain or snow, which substantially limit their “sight” capabilities. LiDAR can also provide surface measurements and a precise resolution of objects within a certain range.